Manual Moderation Failing at Scale

A fast-growing social video platform processing 1.8 million daily uploads across 9 language markets was drowning in a content moderation backlog. A team of 140 human moderators reviewed flagged content using basic keyword filters and user reports, but the average review turnaround had stretched to 72 hours, allowing harmful content to accumulate thousands of views before removal.

Regulatory pressure was mounting: the EU Digital Services Act (DSA) requires platforms to demonstrate "systemic risk mitigation" and provide transparent moderation reporting. The platform faced potential fines of up to 6% of global annual turnover for non-compliance. Meanwhile, creator satisfaction scores had dropped to 3.1/5 because of inconsistent enforcement and wrongful takedowns, which affected 23% of reviewed posts.

Core Roadblocks:

- 72-Hour Review Backlog: With 1.8M daily uploads and only 140 moderators, the queue consistently exceeded 380,000 items. Harmful content (hate speech, graphic violence, misinformation) remained live for an average of 72 hours before review, accumulating an average of more than 12,000 views per violation.

- 23% False Positive Rate: Crude keyword-based filters flagged legitimate content as violations — particularly affecting creators posting in non-English languages where cultural context was lost. Each wrongful takedown triggered a manual appeal process averaging 5 business days, eroding creator trust.

- 61% Violation Detection Rate: The platform caught only 61% of actual policy violations through its combination of automated keyword filters and user reports. Sophisticated violations, such as context-dependent hate speech, manipulated media, and subtle misinformation, consistently evaded detection.

The myAiLabs Ecosystem

myAiLabs deployed its full suite of AI Agents to replace the platform's overwhelmed manual moderation with an intelligent, multi-modal content safety pipeline. Each agent addressed a critical gap — from real-time video/audio/text analysis to regulatory compliance reporting and creator appeal management.

The Multi-Modal Content Safety Engine

The AI-powered content safety engine fundamentally transformed how the platform protects its community. Every upload — video, audio, thumbnail, caption, and comments — now passes through a multi-modal classification pipeline that analyzes visual frames at 5fps, transcribes and screens spoken content, performs OCR on embedded text, and evaluates contextual signals across 14 policy categories simultaneously.

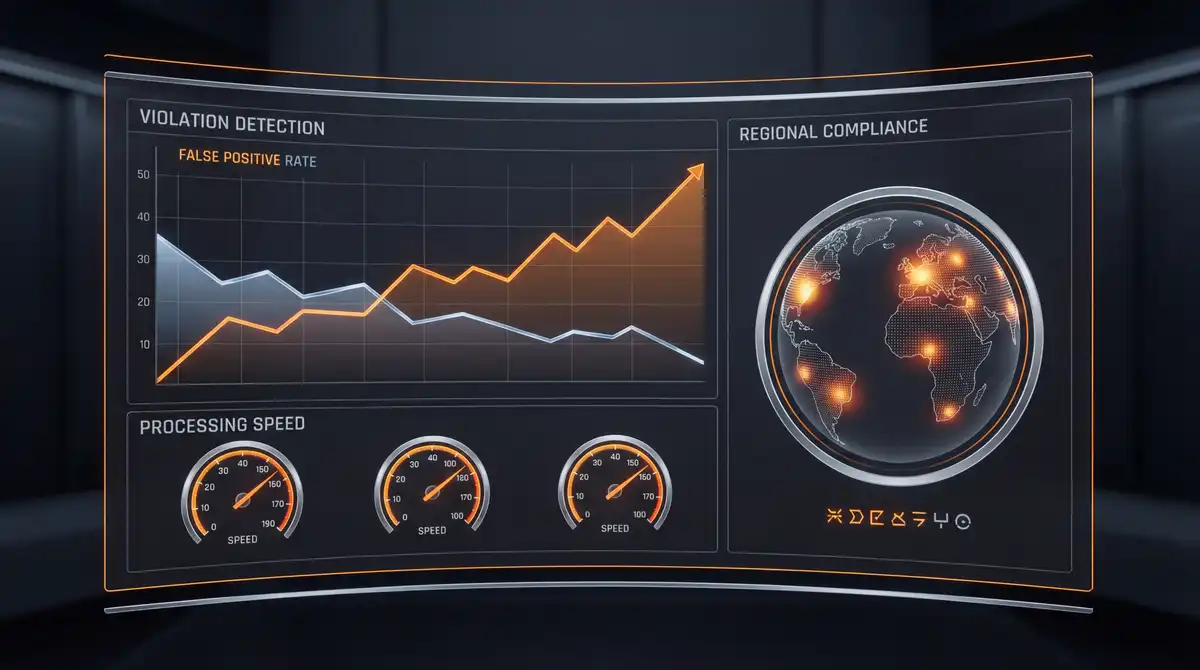
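As a rough illustration of the 5fps visual sampling mentioned above, the sketch below picks which frames of a video would be sent to a classifier. The function name `sample_frame_indices` and its parameters are hypothetical, and the case study does not describe how the real pipeline selects frames; this only shows the arithmetic of downsampling a video's native frame rate to a 5fps analysis rate.

```python
def sample_frame_indices(total_frames: int, native_fps: float,
                         target_fps: float = 5.0) -> list[int]:
    """Return indices of frames to analyze at roughly `target_fps`.

    Hypothetical helper: 5 fps is the sampling rate quoted in the case
    study; everything else here is an illustrative assumption.
    """
    if total_frames <= 0 or native_fps <= 0 or target_fps <= 0:
        return []
    # Never step by less than one frame: a video slower than the target
    # rate is simply analyzed frame by frame.
    step = max(native_fps / target_fps, 1.0)
    indices = []
    i = 0
    while True:
        idx = int(i * step)  # multiply rather than accumulate to avoid float drift
        if idx >= total_frames:
            break
        indices.append(idx)
        i += 1
    return indices


# A 2-second clip recorded at 30 fps yields 10 sampled frames.
clip = sample_frame_indices(total_frames=60, native_fps=30.0)
```

Under these assumptions, a 30fps upload has every sixth frame analyzed, which cuts the visual workload to one sixth while still covering every 200 ms of footage.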

Content that previously sat in a 72-hour review queue is now classified within minutes of upload. High-confidence violations (those with a model confidence score above 0.92) are automatically actioned: removed, age-gated, or demonetized. Borderline cases are routed to specialized human reviewers with AI-generated context summaries that cut per-review time from 4.5 minutes to 90 seconds. The result: the violation detection rate climbed from 61% to 94%, false positives dropped from 23% to 6.1%, and the moderation team was freed to focus on complex edge cases and emerging abuse patterns rather than processing a never-ending queue.
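The confidence-based routing described above can be sketched as follows. Only the 0.92 auto-action threshold comes from the case study; the lower `REVIEW_THRESHOLD`, the category names, and the `route_upload` function are hypothetical and stand in for whatever the production system actually uses.

```python
from dataclasses import dataclass
from typing import Optional

AUTO_ACTION_THRESHOLD = 0.92  # quoted in the case study
REVIEW_THRESHOLD = 0.50       # assumption: lower bound for "borderline" content


@dataclass
class Decision:
    route: str                # "auto_action", "human_review", or "allow"
    category: Optional[str]   # policy category with the highest score, if any
    score: float


def route_upload(scores: dict[str, float]) -> Decision:
    """Route one upload based on its per-category violation scores."""
    if not scores:
        return Decision("allow", None, 0.0)
    # Use the single highest-scoring policy category to decide the route.
    category, score = max(scores.items(), key=lambda kv: kv[1])
    if score > AUTO_ACTION_THRESHOLD:
        return Decision("auto_action", category, score)
    if score >= REVIEW_THRESHOLD:
        return Decision("human_review", category, score)
    return Decision("allow", None, score)


decision = route_upload({"hate_speech": 0.97, "graphic_violence": 0.10})
```

In this sketch a score of exactly 0.92 still goes to a human reviewer, since the text says automatic action applies only above the threshold. Where the lower bound sits is a policy decision that trades reviewer workload against missed violations.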

Metrics That Matter

The myAiLabs agentic ecosystem delivered measurable impact across platform safety, operational efficiency, and regulatory compliance within 6 months of deployment.

Faster Moderation

Average review turnaround fell from 72 hours to under 4 hours through automated multi-modal classification at upload.

Fewer False Positives

False positive rate dropped from 23% to 6.1% through context-aware multi-language classification with monthly bias auditing.

Detection Accuracy

Policy violation detection improved from 61% to 94% across 14 categories and 9 languages through multi-modal AI analysis.